What, Exactly, is Entropy?

Entropy is a bit of a buzzword in modern science. Usually it is used as a synonym for “disorder,” but it is so much more interesting than that.

The concept itself has a long and interesting history. To fully understand what entropy is, we really need to see where it came from.

The very first hint at the concept of entropy was given by Lazare Carnot, most notable for his work studying engines and leading the French Revolutionary Army. Lazare was very interested in the relationship between the work put into a system and the work that comes out of it. He called the output “useful work” and the lost work “transformation energy.” This idea of lost work would later grow into the concept of entropy.

His son, Sadi Carnot, continued his father’s work on engines. He is the creator of the famous Carnot cycle, an idealized cycle that sets the upper bound on the efficiency of classical heat engines. Sadi realized that some energy must always be lost in the conversion from heat to work. This is another way of stating the second law of thermodynamics, which essentially says that the entropy of an isolated system can never decrease.

A number of other scientists then adapted this concept to fit their respective fields. Rudolf Clausius was the first to formally call it “entropy” and made use of it while studying heat. He chose this word based on the Greek word “entropia,” meaning transformation. Ludwig Boltzmann relied heavily on entropy in his development of statistical mechanics. Erwin Schrödinger related mutations in evolution to growth in entropy. Most recently, Claude Shannon based his entire founding of Information Theory on the concept of entropy.

With all these different fields and uses, it’s no wonder entropy has become so ambiguous. I can remember encountering several definitions and equations related to entropy throughout my undergraduate studies, all seemingly unrelated. The only common theme seemed to be that of randomness.

Let’s go through entropy and see how it has been used in each of these fields. We already talked a little bit about it in the sense of thermodynamics, but we can go deeper. A good way to think about entropy is that it measures how spread out energy has become. With this concept in mind, the second law of thermodynamics simply states “energy will always become more spread out with time.”

Hopefully you see how vague this law is, and it kind of has to be. There is no exact function telling us how energy will move, and we also have no concrete definition of “spread-outness.” I’ll give some examples of the second law at work, and hopefully you can understand it.

If we leave a hot pan out, without any heating applied, it will gradually cool off. This is because the heat energy in the pan is slowly spreading to nearby molecules. Over time, this energy becomes much more evenly distributed. Now think about ice cubes floating in water. The molecules within the ice contain less energy than the molecules in the liquid water. Over time, however, the ice will melt as energy from the water spreads into the ice.

Entropy is central to the spontaneity of a process. If a process increases total entropy (such as the two situations described above), then it will happen on its own. If a process decreases entropy, like ice spontaneously forming in a cup of room-temperature water, then it will not happen unless something outside the system (a freezer) acts on it.
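To put a number on this, here is a minimal sketch using the standard Clausius relation, where a body at fixed temperature T that absorbs heat Q gains entropy Q/T. The function name, the temperatures, and the 1000 J of heat are all made up for illustration, and the sketch assumes both bodies are large enough that their temperatures barely change.

```python
def entropy_change_of_heat_flow(heat_joules, source_kelvin, sink_kelvin):
    """Total entropy change (J/K) when heat leaves `source` and enters `sink`.

    Assumption: both bodies stay at a fixed temperature during the transfer.
    """
    if source_kelvin <= 0 or sink_kelvin <= 0:
        raise ValueError("temperatures must be positive, in kelvin")
    # The source loses Q / T_source of entropy; the sink gains Q / T_sink.
    return heat_joules / sink_kelvin - heat_joules / source_kelvin


# Illustrative numbers: a 400 K pan and 300 K room air exchanging 1000 J.
pan_to_air = entropy_change_of_heat_flow(1000.0, 400.0, 300.0)
air_to_pan = entropy_change_of_heat_flow(1000.0, 300.0, 400.0)

print(f"pan -> air: {pan_to_air:+.3f} J/K")  # positive, so it happens on its own
print(f"air -> pan: {air_to_pan:+.3f} J/K")  # negative, so it needs outside help
```

Heat flowing from hot to cold gives a positive total, while the reverse direction gives a negative one, which is why the pan never heats itself back up.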

It’s important to note that entropy is not the same as disorder. A common analogy for entropy is comparing a messy room to a neat one. However, the energy is “spread out” the same amount in both cases. It is incorrect to say one room has more entropy than the other. There’s a lot going on here, and this page goes into much more detail if you’re interested.

The thermodynamic concept is the original, and perhaps the most precise, definition of entropy. It’s only going to get stranger from here!

Next we come to statistical mechanics, a field that has a lot in common with thermodynamics, but is not the same!

A central concept in statistical mechanics is the difference between macrostates and microstates. A macrostate is the larger, observable system with properties such as temperature, pressure, and volume. It contains many small molecules, but we can largely ignore those and only think about the system as a whole. A microstate, however, does consider each individual particle and describes the exact location of each within the macrostate. Any given macrostate may have trillions of possible microstates.

In this framework, entropy is defined as the logarithm of the number of possible microstates (multiplied by a constant, Boltzmann’s constant). Since it’s impossible to know exactly how many microstates are possible, this definition is more conceptual than practical. As a very basic example, imagine a box of gas with the walls of the box suddenly removed. The gas will spread out, increasing the volume that the gas molecules occupy. Since there is more room, there are more microstates available, and entropy has increased.

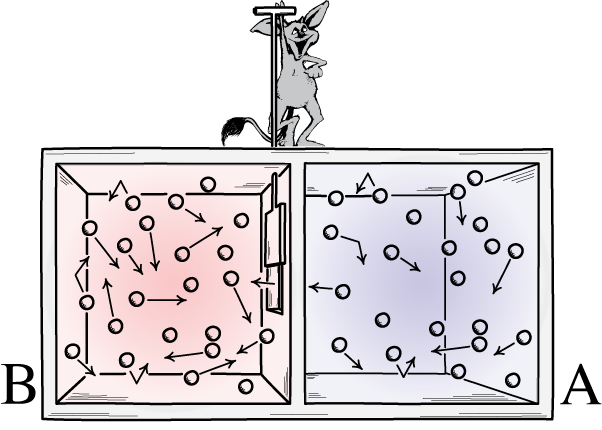
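The counting argument can be tried out on a toy model. The sketch below is my own simplification, not a real gas: it treats the box as a row of cells, allows at most one particle per cell, counts the ways to place the particles, and takes the logarithm. The function name and the cell and particle counts are chosen purely for illustration, and entropy is reported in units of Boltzmann’s constant.

```python
import math


def lattice_gas_entropy(num_cells, num_particles):
    """Entropy in units of k_B for a toy lattice gas.

    Assumptions: particles are indistinguishable and each cell holds at
    most one, so the number of microstates is "num_cells choose num_particles".
    """
    microstates = math.comb(num_cells, num_particles)
    return math.log(microstates)


# Five particles in a 20-cell box, then the same particles after the
# walls are removed and 40 cells are available.
entropy_before = lattice_gas_entropy(20, 5)  # ln(15504)
entropy_after = lattice_gas_entropy(40, 5)   # ln(658008)

print(f"before: {entropy_before:.2f} k_B")
print(f"after:  {entropy_after:.2f} k_B")
```

Doubling the available room raises the microstate count from 15,504 to 658,008, so the logarithm, and with it the entropy, goes up.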

There is a fascinating thought experiment called Maxwell’s Demon that was proposed by James Clerk Maxwell. In it, he describes a possible violation of the second law, which has sparked a lot of interesting discussion.

The final major use of entropy occurs in Information Theory. This definition is significantly different from the others. Information Theory deals with transferring information from a source to a recipient.

Say you wanted to tell a friend what letter grade you got on a test. This could be A, B, C, D, or E. There are only 5 possible messages you could send, which makes the entropy value low. What if, instead, you wanted to send the exact score you got, such as 88.2 or 73.4? This has way more possibilities, and would have a higher entropy value.

You can hopefully see the connection between this concept and the statistical mechanics view of entropy: in both cases, more possibilities means more entropy. The entropy of a communication has many implications for how the signal will be sent. A high-entropy signal requires a lot of information, while a less surprising signal is easier to send.
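The grade example can be worked through with Shannon’s standard formula, H = -Σ p·log2(p), which gives entropy in bits. This is only a sketch under my own assumptions: the function name is made up, scores are assumed to run from 0.0 to 100.0 in steps of 0.1 (1001 values), and the “skewed” grade probabilities are invented to show what happens when some messages are less surprising than others.

```python
import math


def shannon_entropy_bits(probabilities):
    """Shannon entropy H = -sum(p * log2(p)), in bits."""
    if not math.isclose(sum(probabilities), 1.0, rel_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with zero probability contribute nothing to the sum.
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


# Five letter grades, assumed equally likely.
grade_entropy = shannon_entropy_bits([1 / 5] * 5)

# Exact scores 0.0, 0.1, ..., 100.0, assumed equally likely.
score_entropy = shannon_entropy_bits([1 / 1001] * 1001)

# Hypothetical case where an A is far more likely than anything else.
skewed_entropy = shannon_entropy_bits([0.7, 0.15, 0.1, 0.04, 0.01])

print(f"letter grade: {grade_entropy:.2f} bits")
print(f"exact score:  {score_entropy:.2f} bits")
print(f"skewed grade: {skewed_entropy:.2f} bits")
```

The exact score carries more bits than the letter grade, and the skewed grade carries fewer than the evenly spread one, since a message you could mostly guess in advance tells you less.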

Entropy has found its way all over the mathematical and physical worlds. While thinking of it as “disorder” is useful, it removes a lot of the nuance involved. Hopefully you’ve gained a grasp on the different manners of thinking about entropy and the benefits to be had.

Thanks for reading! Leave a comment if you have any thoughts or questions about this article.