The “Well-Posedness” of Differential Equations: the Sense of Hadamard


An overwhelmingly large portion of our modeling of the universe, the enterprise which we call physics, is done by posing and solving differential equations. Somewhat strangely, equations which were developed by physicists to describe very specific phenomena regularly turn out to model completely different phenomena, often in entirely different fields of study. The equation for the motion of a spring, for instance, is so ubiquitous that it has led people to exclaim (only half-jokingly) that everything is a harmonic oscillator. And it was this state of affairs, in which differential equations seem like skeleton keys that open every door in physics, which famously led Eugene Wigner to write about what he called the unreasonable effectiveness of mathematics in the natural sciences [1].

In order for a differential equation to be reliably useful, it must be set up as what mathematicians call a well-posed problem. The purpose of this piece is to describe this concept, examine its historical origins, and explore some related philosophical questions about how mathematics relates to the physical world.

Well-Posedness

To begin with the basics, a differential equation is an equation involving a function and its derivatives. When we solve algebraic equations, the solution (the thing we plug in to make the equation true) is generally a number. For differential equations, the solution is a function, say u: D → ℝ. When the function u has only one independent variable, its domain D is a subset of the real numbers, and the equation is an ordinary differential equation (ODE). If u has n independent variables, then the domain is a subset of ℝⁿ, the space of n-tuples of real numbers. The equation may then contain partial derivatives with respect to several variables, in which case it is a partial differential equation (PDE).

A problem in differential equations is said to be well-posed if:

(1) A solution exists;

(2) That solution is unique;

(3) The solution changes continuously with changes in the data.

Let me describe these conditions in turn. The first condition is almost trivial; it merely reflects the fact that it is possible to pose problems in differential equations in such a way that no solution exists. Naturally, one would like one's problem to have at least one solution.

The second condition is a bit more interesting. Differential equations which satisfy criterion (1) will in general have many, in fact infinitely many, solution functions. Let us verify this with an example (in fact, the simplest example of an ordinary differential equation):

$$\frac{du}{dx} = 0$$

Anyone who has taken high-school-level calculus will immediately recognize that the solution to the above equation is u = c, where c is any real number (i.e., c ∈ ℝ). Of course, the set of real numbers ℝ is uncountably infinite, and therefore there are uncountably many functions which satisfy the above equation. If we were solving this ODE in order to find the value of some physical quantity (for example, the position of a particle given its velocity), this would not be of much help. When you need the answer to a real-life question, having infinitely many solutions to a problem is about as useful as having none at all! Hence it is necessary to supplement a differential equation with a number of auxiliary conditions in order to ensure uniqueness of the solution. These are often called “boundary” or “initial” conditions because they are usually given as data at the boundary of the domain of the problem, or at the start of a problem that proceeds through time (which can be considered the boundary of the time domain!). To resolve this problem for our simple toy example above, it is enough to provide the value of u at a single point on the number line; any point will do. If, for example, we also suppose that u(0) = 3, then there is suddenly a unique solution to the problem, namely u(x) = 3. If this seems trivial, it is because of the simplicity of our example; ensuring the uniqueness of solutions becomes much more difficult for problems involving higher orders of derivatives, and even more so for PDEs.
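To see how the number of auxiliary conditions grows with the order of the equation, here is a short worked sketch using the spring (harmonic oscillator) equation mentioned in the introduction. The symbols ω > 0, A, B, u₀ and v₀ are notation introduced only for this illustration.

```latex
\begin{aligned}
&\text{Equation:}         && \frac{d^2 u}{dx^2} + \omega^2 u = 0, \\
&\text{General solution:} && u(x) = A\cos(\omega x) + B\sin(\omega x), \qquad A, B \in \mathbb{R}, \\
&\text{Two conditions:}   && u(0) = u_0, \qquad \frac{du}{dx}(0) = v_0, \\
&\text{Unique solution:}  && u(x) = u_0\cos(\omega x) + \frac{v_0}{\omega}\sin(\omega x).
\end{aligned}
```

A second-order equation leaves two free constants, so a single condition such as u(0) = u₀ would still leave infinitely many solutions; both pieces of data are needed to pin down A = u₀ and B = v₀/ω.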

The third criterion listed above can be even harder to prove. Suppose that criteria (1) and (2) hold; you have a solution to your problem, and you are sure that it is the only one! Criterion (3) holds if, when you make a small change to the initial or boundary conditions, your solution changes only by a small amount. Of course, “small” is a relative term. When mathematicians say small, they normally mean “arbitrarily small,” or as small as you could wish; give me something small, and this thing is smaller! This makes sense, because once you fix a positive number, you can always find a smaller one. This type of smallness is the same kind that comes up in the definition of limits and of the continuity of a function. In fact, that is what criterion (3) is saying: if you let your boundary data vary continuously, the corresponding solution must vary continuously too.
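One common way to make criterion (3) precise is the ε-δ statement below. This is a sketch rather than a definition taken from Hadamard: f and g stand for two sets of initial or boundary data, u_f and u_g for the corresponding unique solutions, and the two norms (how one measures the size of data and of solutions) are choices that have to be made for each problem.

```latex
\forall\, \varepsilon > 0 \;\; \exists\, \delta > 0 : \qquad
\| f - g \|_{\mathrm{data}} < \delta
\;\Longrightarrow\;
\| u_f - u_g \|_{\mathrm{sol}} < \varepsilon .
```

Whether a given problem is well-posed can depend on which norms are chosen, which is part of why this criterion is the hardest of the three to establish.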

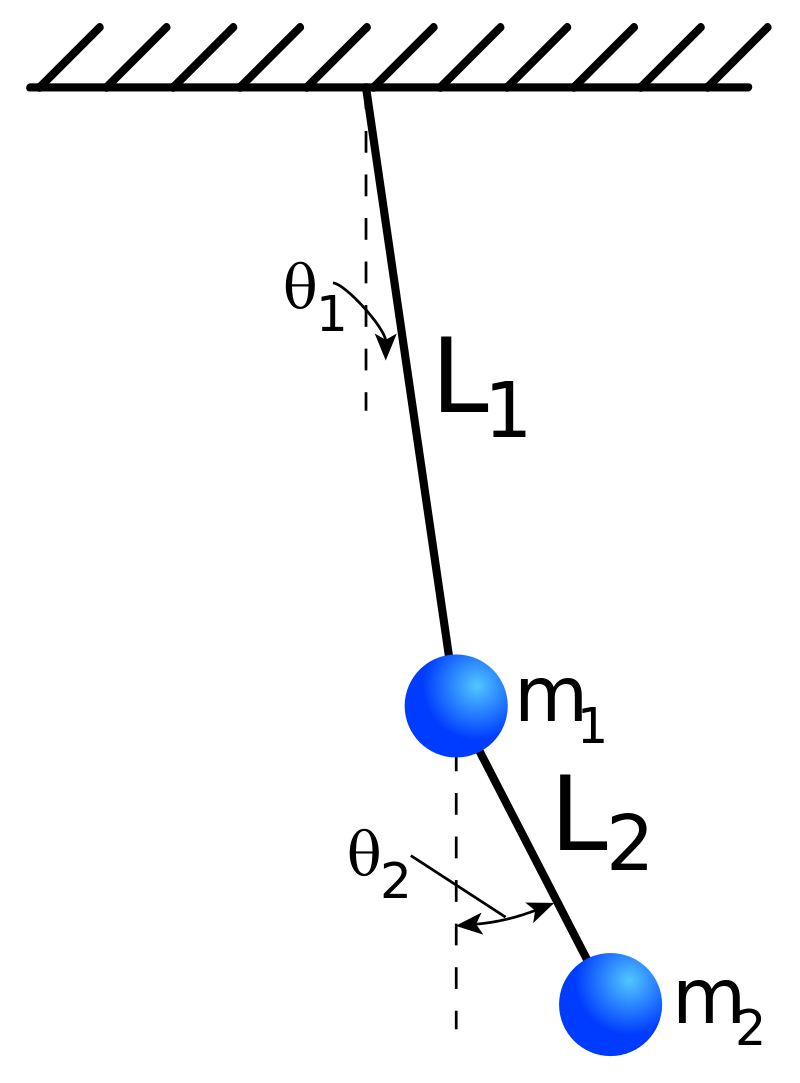

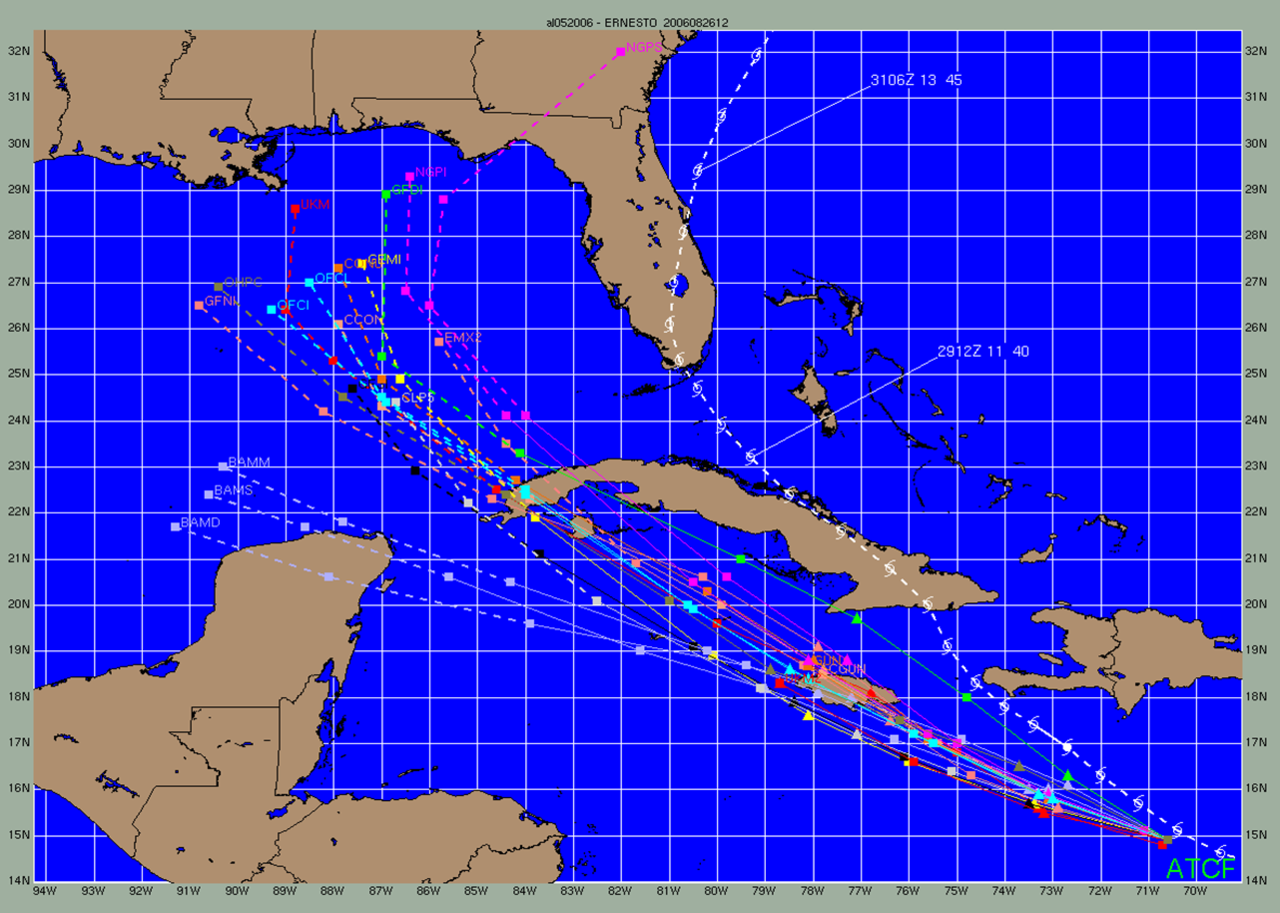

Our simple example of an ODE from earlier clearly satisfies this criterion. In fact, the solution varies exactly as the initial data; if we assume that u(a) = b with a, b ∈ ℝ, then u(x) = b for all x. However, there are cases where one can formulate a problem, find a solution, and prove that it is unique, but in which (3) does not hold in any practically useful sense. Consider the famous double pendulum problem. It is governed by a relatively elementary system of differential equations and is mechanically simple; you could perhaps construct one at home. The equations are easily integrated forward in time, and you can prove that the solution is unique. However, the system is a prime example of chaos. Strictly speaking, its solution still depends continuously on the initial conditions over any fixed span of time, but small differences grow so quickly that you could not make the pendulum follow the same path twice no matter how precisely you tried to drop it from the same location. And this is a simple system; the problem only gets worse when studying physical systems of increasing complexity. One can see this in weather models, where a small change in the current state can lead to an entirely different weather pattern being predicted for next week. This has become colloquially known as the butterfly effect.
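The sensitivity described here can be seen in a few lines of code. The sketch below does not simulate the double pendulum; as a stand-in it uses the Lorenz system, a three-variable toy model of atmospheric convection with its usual textbook parameters, and a hand-written fourth-order Runge-Kutta step. The starting point, step size and perturbation size are arbitrary choices made for illustration.

```python
def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz system (standard textbook parameters)."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(f, state, dt):
    """Advance `state` by one classical fourth-order Runge-Kutta step."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = f(state)
    k2 = f(shifted(state, k1, dt / 2))
    k3 = f(shifted(state, k2, dt / 2))
    k4 = f(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation_history(eps=1e-8, dt=0.01, steps=5000):
    """Distance between two runs whose starting points differ by `eps`."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + eps, 1.0, 1.0)
    history = []
    for _ in range(steps + 1):
        history.append(sum((p - q) ** 2 for p, q in zip(a, b)) ** 0.5)
        a = rk4_step(lorenz, a, dt)
        b = rk4_step(lorenz, b, dt)
    return history


history = separation_history()
print("initial separation:", history[0])
print("largest separation seen:", max(history))
```

The two runs start a hundred-millionth of a unit apart, yet over the simulated interval the gap grows until it is comparable to the size of the attractor itself, at which point the two "forecasts" have nothing to do with each other.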

This uncertainty has real-world repercussions. Anyone living in Miami or New Orleans knows the feeling of looking at the forecast of a hurricane a week from landfall, with possible paths varying from Texas to the Carolinas. The possibility that a model might exhibit this type of chaos is particularly daunting in applied physics, as the initial/boundary data must be obtained through empirical measurements, which necessarily carry some amount of error. If (1)-(3) hold for your model, then the error in your prediction shrinks with the error in your measurements. Lacking criterion (3), any amount of error in the initial data, no matter how small, can imply an arbitrarily large error in your predictions. This error cannot be removed; having a unique solution to the problem becomes almost useless in describing what the system will actually do! One may now begin to see the importance of the well-posedness of a mathematical problem and its implications for physical prediction.

Enter Hadamard

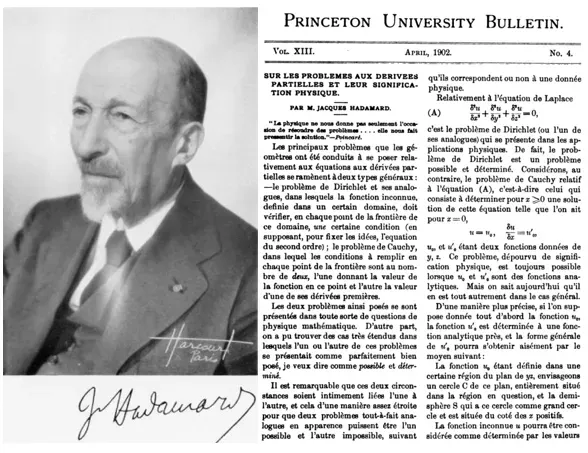

This conception of a well-posed problem was initially formulated and popularized by the mathematician Jacques Hadamard (1865–1963). Hadamard worked deeply in the field of PDEs, which are generally more difficult to deal with than their ordinary counterparts. He wrote a very important book on the subject, which has been translated to English from French [2], and in which he specifies the criteria for well-posedness. However, one of the earliest references to the concept appears to be the 1902 paper [3] Sur les problèmes aux dérivées partielles et leur signification physique, or “On the problems with partial derivatives and their physical significance”. Hadamard’s 1902 paper analyses some important classical problems in PDEs and suggests some very interesting philosophical questions; I will review it here. Being unable to find an English translation of this paper, I translated it myself. I am absolutely no expert in the French language (I am barely a novice), so take any translations I may give with a grain of salt!

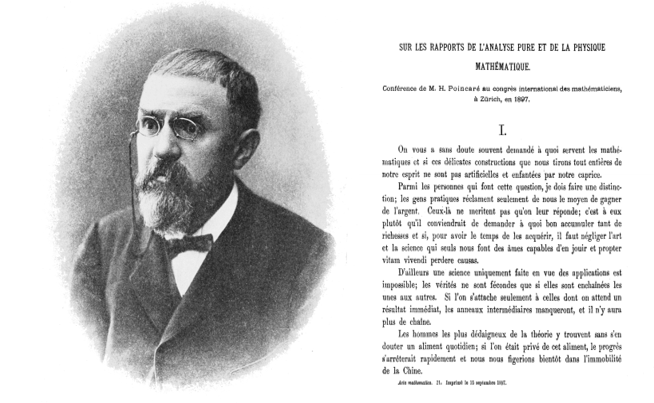

The paper opens with a quotation from Henri Poincaré (1854–1912), a polymath who is sometimes referred to as “the last universalist,” being one of the last people who could understand and contribute to all the subfields of mathematics and physics that existed during his lifetime. The quotation reads as follows:

La physique ne nous donne pas seulement l’occasion de résoudre des problèmes… elle nous fait pressentir la solution.

Physics does not only give us the occasion to solve problems… it makes us anticipate the solution.

This quotation comes from a talk given by Poincaré at the International Congress of Mathematicians in Zurich in 1897 [4], which ultimately became chapter five of his book “La valeur de la science” or “The Value of Science”. Poincaré in fact wrote three books on the philosophy of science and mathematics towards the end of his life, all of which have been translated to English [5]. In my humble opinion, these books should be considered necessary reading for anyone interested in the sciences. In the talk from which the above quotation is derived, Poincaré sought to analyze the ways in which pure mathematics and mathematical physics were mutually supportive. He describes the intimate interpenetration of the two fields: how mathematical analogies suggest discoveries to physicists long before they are experimentally verified, as had occurred in Maxwell’s electromagnetic theory before Poincaré wrote, and would occur again in the Einsteinian revolution to follow. But the usefulness of analogy goes both ways; the field of physics suggests pure mathematical problems, especially in the field of differential equations, which might otherwise never have been pursued or solved. To quote again from Poincaré [5]:

The theory of partial differential equations… has been developed chiefly by and for physics. But it takes many forms, because such an equation does not suffice to determine the unknown function, it is necessary to adjoin to it complementary conditions… If the analysts had abandoned themselves to their natural tendencies, they would never have known but one… there are a multitude of others which they would have ignored. Each of the theories of physics, that of electricity, that of heat, presents us these equations under a new aspect. It may therefore be said that without these theories, we should not know partial differential equations.

Hence physics provides mathematicians with problems to solve, particularly in the case of boundary conditions for PDEs. We most often try to solve those problems where the conditions have physical meaning, as when the values of the function at the boundary correspond to a distribution of electric charge or heat. And the fact that the same differential equation can apply to many different physical scenarios means that physics problems that have already been solved can suggest to us the answer to mathematical problems that we are working on! This is the meaning of the quotation that sets the tone for Hadamard’s paper.

Beginning as if to directly continue Poincaré’s point, Hadamard distinguishes between two different types of boundary conditions which are often applied to PDEs. The first, and perhaps the most natural and common, is the so-called Dirichlet condition, in which the values of the solution function u are prescribed on the boundary of the domain. The second is the so-called Cauchy problem, where conditions on both the function u and one of its first partial derivatives are given. In Hadamard’s words:

Les deux problèmes ainsi posés se sont présentés dans toute sorte de questions de physique mathématique. D’autre part, on a pu trouver des cas très étendus dans lesquels l’un ou l’autre de ces problèmes se présentait comme parfaitement bien posé, je veux dire comme possible et déterminé. Il est remarquable que ces deux circonstances soient intimement liées l’une à l’autre, et cela d’une manière assez étroite pour que deux problèmes tout-à-fait analogues en apparence puissent être l’un possible et l’autre impossible, suivant qu’ils correspondent ou non à une donnée physique.

The two problems thus posed present themselves in all sorts of questions in mathematical physics. On the other hand, it has been possible to find very extensive cases in which one or the other of these problems presents itself as perfectly well-posed, by which I mean as possible and determined. It is remarkable that these two circumstances are intimately linked to one another, and closely enough that two problems, quite similar in appearance, may be the one possible and the other impossible, depending on whether or not they correspond to physical data.

By “possible and determined” Hadamard means criteria (1) and (2) from our earlier discussion, namely that the solution exists and is unique. For a given differential equation, one type of boundary condition may yield a well-posed problem, while the other may not. Hadamard is suggesting that the well-posed problem will be the one which corresponds intuitively to a physical scenario!

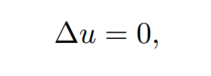

Hadamard’s first example is fittingly the Laplace equation, the solutions of

which are called harmonic or potential functions. In three spatial dimensions (x, y, z), this equation can be written as

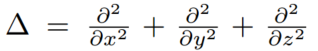

where

is the so-called Laplacian differential operator. Thus a harmonic/potential function is one for which the sum of its second partial spatial derivatives is zero.

The Laplace equation plays the same role for PDEs that the harmonic oscillator plays for ODEs; it is ubiquitous across mathematical physics. It is the perfect example of the phenomenon that Poincaré was talking about. Being present in the theories of gravitation, electromagnetism, hydrodynamics, elasticity (the list goes on!), it serves as a bridge between otherwise disjointed fields, suggesting strategies and solutions to problems throughout science which might otherwise never have arisen. (A quick aside to share the example closest to my heart: when Heinrich Hertz first formulated the laws of contact between solid elastic bodies [6], an analytical solution to the problem presented itself immediately from equations formulated in the field of electromagnetism, with which Hertz is perhaps more famously associated. Decades later, when Hertz’s contact law was generalized to include tangential motion and friction [7], the necessary potential function was borrowed by Mindlin from the theory of hydrodynamics [8].)

Hadamard analyses the Laplace equation when its domain is a so-called “half-space”, which can be thought of as 3D space cut in half, so that while y and z range from negative to positive infinity, you consider only positive values of x. The boundary of the domain, on which the auxiliary conditions must be set, is therefore the infinite plane x = 0. The reader can look to Hadamard’s paper [3] for the details of the analysis; what matters here is that the Dirichlet problem in this context, which comes up over and over again across physics, is always well-posed and admits a well-known solution. However, the Cauchy problem of the Laplace equation on a half-space is often ill-posed, in that a solution can only be found under very strict conditions. Hadamard also notes that this problem is “dépourvu de signification physique”, i.e. devoid of physical meaning.
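A concrete feel for how badly the Cauchy problem for the Laplace equation can behave comes from a classic textbook family of solutions, usually attributed to Hadamard. The sketch below is not the argument of the 1902 paper, and it works in two variables (x, y) rather than three to keep things short. For each integer n, the function u_n(x, y) = sinh(nx) sin(ny) / n² is harmonic, vanishes on the boundary x = 0, and has normal derivative sin(ny) / n there. The function names are made up for this illustration.

```python
import math


def u(n, x, y):
    """Harmonic function u_n(x, y) = sinh(n*x) * sin(n*y) / n**2."""
    return math.sinh(n * x) * math.sin(n * y) / n ** 2


def cauchy_data_size(n):
    """Largest |du/dx| on the boundary x = 0, where du/dx = sin(n*y) / n.

    The other piece of Cauchy data, u(0, y), is identically zero.
    """
    return 1.0 / n


def solution_size(n, x):
    """Largest |u_n(x, y)| over all y, which is sinh(n*x) / n**2 for x >= 0."""
    return math.sinh(n * x) / n ** 2


for n in (1, 5, 10, 20, 40):
    print(n, cauchy_data_size(n), solution_size(n, x=1.0))
```

As n grows, the boundary data shrink toward zero while the solution one unit away from the boundary grows without bound. Arbitrarily small changes in the data therefore produce arbitrarily large changes in the solution, which is exactly a failure of criterion (3).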

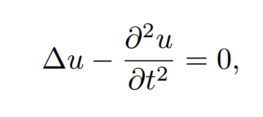

Hadamard’s second example is “l’équation du son,” or the equation of sound, by which he means the three-dimensional wave equation:

$$\frac{\partial^2 u}{\partial t^2} = c^2\, \nabla^2 u,$$

where c is the wave speed and the Laplacian operator ∇² is defined as above. This PDE therefore has a solution that is a function of four independent variables: the spatial variables x, y, z and the temporal variable t. Thus the domain of the wave equation is a subset of ℝ⁴. It models the propagation of waves (sound or otherwise) in three-dimensional space through time. There are therefore more options for the types of boundary (or initial) conditions that one can apply, even while remaining within the context of Dirichlet and Cauchy problems.

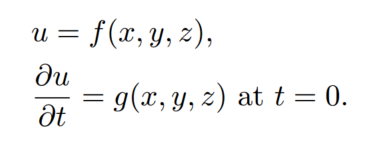

Hadamard’s conclusions about the wave equation are therefore more nuanced than those for the Laplace equation, although analogous. For the wave equation, it is the Cauchy problem that naturally arises in the study of physical waves, where the conditions are given as functions at the initial time t = 0:

$$u(x, y, z, 0) = f(x, y, z), \qquad \frac{\partial u}{\partial t}(x, y, z, 0) = g(x, y, z).$$

This problem is well-posed, and has a unique solution given by the Poisson formula. One could imagine knowing the position and velocity of all points beneath the earth before an earthquake, and therefore being able to calculate the motion of all these points as the waves propagate through time. Knowing only the initial position, or only the initial velocity, would not be enough to determine the solution. Thus the Dirichlet problem for the wave equation is not well-posed, but the Cauchy problem as described above is! The case of the wave equation therefore appears to be the opposite of the Laplace equation.
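The continuous dependence on initial data can be checked numerically in a simplified setting. The sketch below is an assumption-laden stand-in, not the three-dimensional Poisson formula: it uses the one-dimensional wave equation with unit wave speed and zero initial velocity, for which d'Alembert's formula gives u(x, t) = ½[f(x − ct) + f(x + ct)]. The Gaussian starting profile, the sine-wave perturbation and the function names are all choices made for illustration.

```python
import math


def dalembert(f, x, t, c=1.0):
    """Solution of u_tt = c^2 u_xx with u(x, 0) = f(x) and u_t(x, 0) = 0."""
    return 0.5 * (f(x - c * t) + f(x + c * t))


def base_profile(x):
    """Unperturbed initial displacement (an arbitrary smooth bump)."""
    return math.exp(-x * x)


def perturbed_profile(x, eps, n):
    """Initial displacement with a wiggle of amplitude eps and frequency n."""
    return base_profile(x) + eps * math.sin(n * x)


def max_solution_change(eps, n, t, samples=2001):
    """Largest change in the solution at time t, sampled on [-10, 10]."""
    xs = [-10.0 + 20.0 * i / (samples - 1) for i in range(samples)]
    return max(
        abs(dalembert(lambda s: perturbed_profile(s, eps, n), x, t)
            - dalembert(base_profile, x, t))
        for x in xs
    )


for n in (1, 10, 100):
    print(n, max_solution_change(eps=1e-3, n=n, t=5.0))
```

However rapidly the perturbation oscillates, the change in the solution never exceeds the size of the change in the initial data (up to rounding), because the formula simply averages two shifted copies of the data. A small error in the measurement stays a small error in the prediction.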

However, there is one small caveat that Hadamard spends a large portion of his paper analyzing. While the Laplace equation is symmetric in all of its variables, the wave equation is not. The variable t does not play the same role as the spatial variables (x, y, z). One could therefore also ask whether the Cauchy problem for the wave equation with boundary conditions

$$u(0, y, z, t) = f(y, z, t), \qquad \frac{\partial u}{\partial x}(0, y, z, t) = g(y, z, t)$$

is well-posed or not! To return to our earthquake analogy, this would be like knowing the vertical displacement of the surface of the earth, along with the rate at which this displacement changes as one moves inward from the surface, for the whole duration of the event; does this give us enough information to know how everything played out underground? Hadamard spends the second half of his paper proving, with a very elegant bit of classical analysis, that it does not. In fact he proves that if a solution existed, it would have to be unique, but that a solution does not exist! It is my hope that this little teaser will lead some readers to work through Hadamard’s argument on their own.

A Philosophical Question

Hadamard’s observations lead naturally to questions that are philosophical rather than mathematical in nature. Why would it be that the problems which turn out to be well-posed correspond to physical scenarios, while the ill-posed problems do not? This is akin to Wigner’s question: why does it keep turning out that mathematics is so darn useful? But what Hadamard is suggesting seems even stronger than this: that only those problems which correspond naturally to physical problems are usefully solvable! It seems that there may be some strange alignment between mathematics and nature, where what is physically possible determines what is mathematically possible; or is it vice versa? One is tempted to wax metaphysical. Perhaps God is a mathematician, and these things turn out the way that they do because nature is deeply, truly mathematical. These are the types of non-falsifiable statements which, while beautiful, do not mean much, and have no real place in scientific investigation.

One answer, which was given by Richard Hamming (1915–1998) in response to Wigner [9] but which I believe applies here as well, is evolutionary rather than metaphysical in nature. He suggested that we came upon our mathematical models because of natural selection. Nature selected for the minds that could and would naturally arrive at models which reflect nature; this could explain why our mathematics seems so unreasonably useful, and why the well-posed problems in the sense of Hadamard correspond to physical scenarios. Then perhaps our mathematics, rather than being necessary, unique, and eternal, is simply the one that was developed by the minds of a particular group of primates that evolved under certain environmental conditions. It may seem like a logical necessity, but perhaps our logic itself was primed in us by nature in order to keep us alive on our world. It follows that other beings, evolving under different conditions, might develop entirely different mathematics. This position fits quite nicely with the philosophical position of Conventionalism, which was developed by Poincaré in his book Science and Hypothesis [5]; but this topic deserves an article all its own.

A third option, which I think is quite reasonable, is that things are simply messier than we (or Hadamard) would like to believe. Our models are always toys, approximations of physical scenarios which are more complicated and have far more variables than we could ever model truly accurately. And while it is true that many well-posed problems correspond to natural phenomena, we have also found large classes of ill-posed problems that we would like to solve for practical purposes. There are numerous international peer-reviewed journals addressing the class of inverse problems, which entail calculating the causes of observations. Can one tell the shape of a drum by hearing its sound? Can one determine the distribution of mass within a planet by measuring its gravitational field? Can we tell how the individual particles in a mass of sand are arranged by measuring the pressure on the boundary? Answers to these types of questions are incredibly useful in determining parameters which are difficult or impossible to observe, but the problems themselves are almost always ill-posed. Things do not seem to be as cut-and-dried as Hadamard suggests.

Further Resources

There is a beautiful and thorough biography of Hadamard, which I am just now reading, that does a wonderful job of explaining the depth and breadth of his scientific contributions without spending too much time hashing out the mathematical details [10]. I would recommend it to anyone who would like to find out more about this mathematical giant.

In the first half of this year (the notorious 2020), I was teaching a course on differential equations to graduate students in engineering. When COVID-19 put a premature stop to our lecture meetings, I made a series of video lectures which serve, I hope, as a decent introduction to the theory of partial differential equations. Many of them provide elementary overviews of topics discussed directly in this essay. I link them as follows:

References

- [1] Wigner, E. (1960). Communications on Pure and Applied Mathematics 13(1).

- [2] Hadamard, J. (1923). Lectures on Cauchy’s Problem in Linear Partial Differential Equations. Yale University Press.

- [3] Hadamard, J. (1902). Princeton University Bulletin 14(4): 49.

- [4] Poincaré, H. (1897). Congrès International des Mathématiciens. Zurich.

- [5] Poincaré, H. (2001). The Value of Science: Essential Writings of Henri Poincaré. The Modern Library, New York.

- [6] Hertz, H. (1882). On the Contact of Rigid Elastic Solids and on Hardness. Chapter 6. Assorted Papers by H. Hertz.

- [7] Mindlin, R. (1949). Journal of Applied Mechanics 16: 259.

- [8] Lamb, H. (1932). Hydrodynamics. Cambridge University Press.

- [9] Hamming, R. (1980). The American Mathematical Monthly 87(2).

- [10] Mazya, V. and Shaposhnikova, T. (1999). Jacques Hadamard, A Universal Mathematician. American Mathematical Society, London Mathematical Society.