Intuition, Complexity and The Last Paradox

AI since Artistotle (Part 3)

hat happened when there was neither direct evidence nor any eye witness in an ancient Greek court? A juror could not rely on Aristotlelian logic, since neither the acussor nor the accused used logic to reveal the truth; rather, they used it to make their case. Aristotle described an example in the following case, paraphrased from (Aristotle ‘On Rhetoric’: A Theory of Civic Discourse, 1991):

A small man X accuses a larger man Y of assaulting him. A juror asks X a hypothetical question: what if it was you who had started the fight?

- X could argue that a smaller and weaker man would be “impossible” to start the fight, for he would “certainly” be painfully hurt.

- On the other hand, Y could counter-argue that he, being visibly so much larger and stronger than X, is “unlikely” to start the fight for he would “certainly” look bad in court.

The jury needed to decide which side of “reasoning” was more “plausible”. Interestingly, this kind of reasoning shares the same principles as what mathematicians employ to solve a problem.

George Pólya: Intuition as Plausible Reasoning

George Pólya, 1887-1985, a Hungarian mathematician and the father of problem solving in modern mathematical education, believed that plausible reasoningwas what was behind the mathematical intuition, in line with Poincaré’s idea about logic and intuition. He was popularly quoted:

The result of the mathematician's creative work is demonstrative reasoning, a proof; but the proof is discovered by plausible reasoning, by guessing (Polya, 1954).

Instead of superficially praising the genius of the great mathematicians, admiring their hunches, or perhaps envying their luck, Pólya used a handbook of plausing reasoning to demystify their art of guessing. The diagram below shows how beginning with a general triangle, you can follow different plausible reasoning patterns to make an equilateral triangle by specialization, a tetrahedron or polyhedron by analogy, and a general polygon by generalization (Polya, 1954).

Pólya gave “analogy” a special place. He wrote:

Analogy pervades all our thinking, our everyday speech and our trivial conclusions as well as artistic ways of expression and the highest scientific achievements. (Polya, 1973)

Plausible reasoning is applicable to problem solving, as well as all levels of scientific discovery. For example, Turing drew an analogy between the unsovlabiloty of the halting problem and the incompleteness of a formal system, which Gödel’s saw an analogy with the question of whether there are more real numbers than natural ones by Georg Cantor, 1845-1918, German mathematician.

Revisiting Hilbert’s Decision Problem

Now, let’s revisit Hilbert’s decision problem to see what role plausible reasoning, or intuition, might be playing. Since the decision problem was created before the invention of the computer, Hilbert and his colleagues certainly did not know Computer Science. They might have “intuitively” thought that “mathematical intuition” could be replaced by a trivial brute-force search, over all possible proof steps. They almost certainly overlooked the running time of such a search, which turns out to be problematic. To understand this, what if we ask the question differently? Considering that we are interested only in proofs shorter than N (in terms of symbols), we have the “computational” decision problems for different N. There is definitely a solution to such a computational decision problem that simply loops over all possible proofs shorter than N . The issue now changes from possibility to feasibility: is such a brute-force search feasible when N grows big?

This is where computational complexity comes in. According to the Computational Complexity theory, there are two major kinds of running time, polynomial and exponential. Given a problem size N, the running time of the former grows as a polynomial in N, such as 2N, or 3N², and that of the latter grows as an exponential in N, such as 2^N or 3^N. A problem requiring exponential-growing time is considered as “infeasible” since it could force a human inquirer, even if using a computer that consumes all the resources in the universe, to wait for as long as the age of the universe to get an answer.

Human vs. Machine Proving

If there is already a proof, we can write a polynomial-time program to check its correctness. However, this does not mean that a program to “find” a proof is polynomial time. The complexity class of problems that can be solved by polynomial-time programs is referred to as P, while that of the problems that can be solved by “guessing” an answer and checking it in polynomial time are called NP, standing for Non-deterministic Polynomial Time. As we can see, machine proving is in NP. The complexity class P, consisting of all problems solvable in polynomial time, is contained in NP. A problem is assigned a complexity class (such as P or NP) based on the fastest known programs to solve it. Therefore, a problem may change class when a faster program is discovered. For now, the computational decision problem is considered in the NP class. How significant might it be for machine proving if there is a polynomial-time solution?

Gӧdel foresaw such significance before Computational Complexity theory was conceived, and wrote about it in his 1956 letter to John von Neumann (1903-1957, mathematician, physicist, known for von Neumann architecture, the blue print of all modern computers) :

If there actually were a machine with [running time] ∼ kN (or even only with ∼kN²) [for some constant k independent of N], this would have consequences of the greatest magnitude. That is to say, it would clearly indicate that, despite the unsolvability of the Entscheidungsproblem [Hilbert’s problem of giving a complete decision procedure for mathematical statements], the mental effort of the mathematician in the case of yes- or-no questions could be completely replaced by machines. One would indeed have to simply select an N so large that, if the machine yields no result, there would then also be no reason to think further about the problem (Aaronson, 2016).

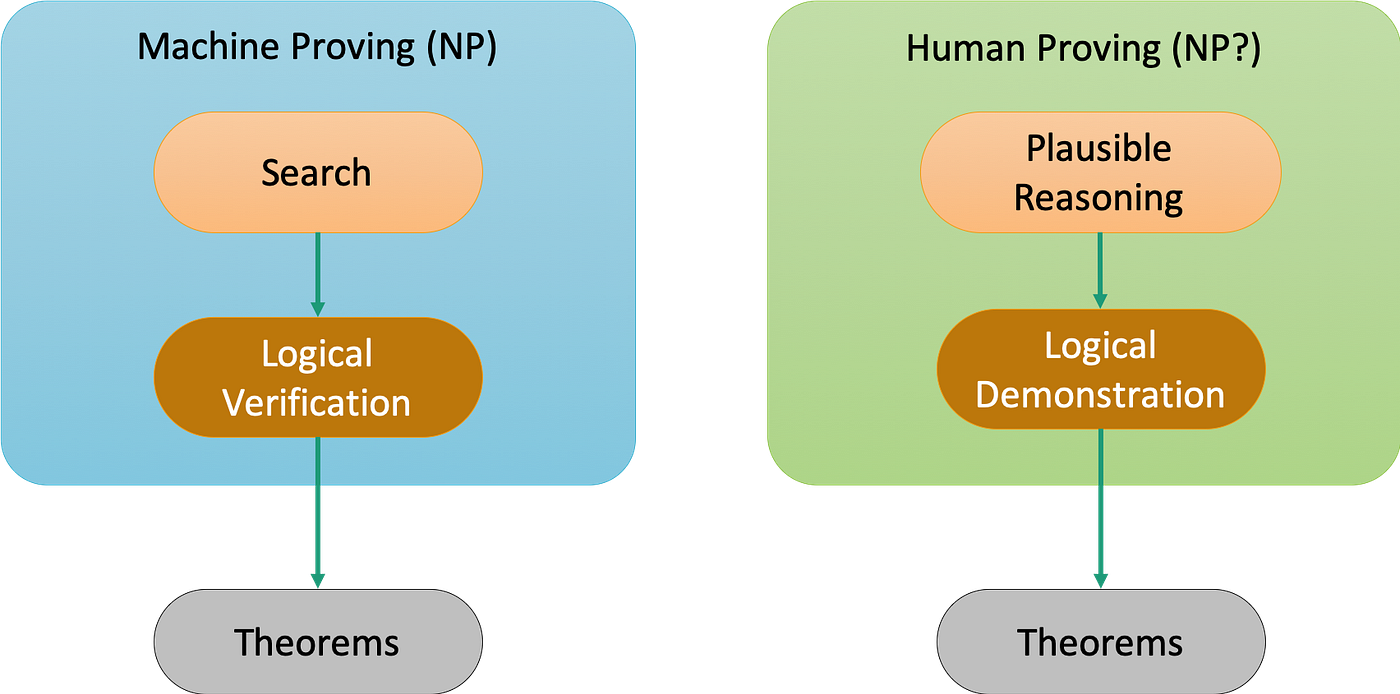

What is the “mental effort of the mathematicians”? According to Poincaré and Pólya, mathematicians solve problems in 2 stages: first, using plausible reasoning, or art of guessing, to form conjectures, and second, using logic to demonstrate the winning conjecture. Similarly, machine proving follows 2 stages: searching for a proof, and logically verifying the proof. Hilbert originally asked if there was a universal proof mechanism to eliminate the necessity of using intuition. Gӧdel effectively rephrased the question to whether there is a polynomial-time “smart” search or “artful” guessing that is equivalent to intuition.

Stephen Cook and Leonid Levin: The One Program to Rule Them All

The Boolean Satisfiability (SAT) problem asks if there is an assignment of 0 (FALSE) or 1 (TRUE) to the variables in a Boolean formula, for exampe, (x1 ∨ ¬x2) ∧ (¬x1 ∨ x2 ∨ x3) ∧ ¬x1, such that it can be evaluated to be 1. Stephen Cook (1939-) and Leonid Levin (1948-), American-Candadian computer scientist and mathematician and a Russian-American mathematician, respectively, proved independently that if the SAT is in P, that is, it has a polynomial-time solution, then “all” NP problems can be reduced to the SAT and be solved in polynomial time. In other words, it would imply P=NP in that all NP problems can be solved in polynomial time!

Deciding if such a super program exists is referred to as the P vs. NP problem, which is perceived as the greatest open problem in modern science. As we can see now, what Gӧdel thought as consequences of “greatest magnitude” is really P=NP. A polynomial-time SAT solver would be the “one program to rule them all”, like the “one ring” in The Lord of the Rings. There are other problems like the SAT to which a solution would imply P=NP. All these problems are collectively referred to as being NP-Complete.

Despite having worst-case exponential time, today’s SAT solvers have been employed to solve many mission-critical problems in mathematics, chip design, and software checking, as well as decision making in a variety of areas. Why are we still concerned with P vs. NP? If the “one program” showed up, and thus proved P = NP, its impact would be as devastating as those of the halting oracle and the universal proof mechanism, if they existed. Mathematical ingenuity and creativity would become insignificant, and mathematicians would no longer need training in plausible reasoning. As a matter of fact, it would even eliminate the entire necessity for the vibrant and exciting research in AI and Machine Learning. In essence, it would be the final nail in the coffin for the mental effort of the mathematicians and computer scientists. Today, it is a common belief that the “one program” does not exist, or P != NP. Similar to Turing’s proof of the non-existence of the halting oracle, it seems that “one program” would be so insanely powerful that proving its non-existence will be easier to prove the contrary, similar to how Turing proved the non-existence of the halting oracle. It turns out that proving P != NP is extremely difficult. Such a barrier between P and NP, at least for now, seems to be like the “guardian of humanity”.

AI vs. Humanity

On the other hand, we are seeing AI research on theorem proving, (Bansal et al., 2019), (Bansal et al., 2020) and (Polu & Sutskever, 2020), and (Selsam et al., 2019). Instead of trying to replace human intuition as what the formalists had hoped for, today’s AI researchers are now trying to make the computer learn how we use intuition. Just as logic is to study how logical reasoning works independent of the contexts in which it is applied, modern AI is to investigate intuition relatively independent of the data it consumes and produces.

The question is, do we want to make AI imitate each of us individually, or all of us collectively, and cross-generationally? To answer this question, let’s take a look at some examples of how we solved difficult problems in the past.

The longest proof, at 200 terabytes, is for the Boolean Pythagorean Triples (BPT) problem, which asks

Is it possible to color each positive integer blue or red in such a way so that no integer triplet a, b, and c that satisfies the Pythagorean equation a²+b²=c² is entirely the same color?

The problem was raised in the 1980s; however, it was not proved until 2016 when Heule et al. showed that up until 7,824, it is possible to color integers in this way, but beginning with 7,825, it becomes impossible (Heule et al., 2016). The proof is so long that it will take a human 10 billion years to read all 200 terabytes, equivalent to all of the digital texts held by the Library of Congress! The mathematicians used “mental effort” to reduce the original 102,300 ways of coloring the integers up until 7,825 to 1 trillion, and then handed the computer the tedious task of going over all of the cases, which required 800 CPU cores running for 2 days.

Regarding the length of time needed to solve a problem, the Four Color Theorem took humans 125 years to prove, and Fermat’s Last Theorem a whopping 358 years!

There might be 2 concerns regarding whether humanity can serve as a “role model” for AI:

- The human provers used computers in their proofs

- The aforementioned problems had been well-studied and popularly-discussed in the mathematics community for decades or even centuries before they were solved.

For the first concern, humans handing over tedious tasks to the computer is just like a program calling subroutines or libraries. Regarding the second concern, if humans can accumulate mathematical knowledge over time, so can AI using the Internet at an orders-of-magnitude faster pace. In fact, one of the reasons for recent breakthroughs in AI is that it consumes and digests the human mind and reflects it back at us.

It appears that instead of trying to make AI a mathematical genius, we are making it follow the collective human mind. However, it remains to be seen if AI can mimic human intellectual collaboration in terms of debates, brainstorming, and exchanging ideas.

The Last Paradox

Let’s recap what we have discussed so far:

- Aristotle established logic for correct reasoning, and envisioned intuition to establish first principles.

- From Aristotle to Hilbert, mathematicians and logicians had advanced logic from mental and linguistic skills to a science of symbolic manipulation.

- Believing logic was the foundation and the only driving force, the formalists strived to “mechanize” mathematics to eliminate the necessity of using intuition and being meaningful (to humans).

- Gödel and Turing showed the formalists’ dream was unachievable in terms of “computability” due to the inevitable self-referential paradoxes in their systems; the computer was conceived as a tool to demonstrate the necessity of intuition.

- The unsolvability of the decision problem could be viewed as infeasible due to an exponential-time brute-force search from the perspective of “computational complexity”.

- In contrast to the formalists' approach to intuition, AI researchers are trying to imitate how humans use intuition to solve problems.

Will AI completely replace mathematical intuition one day? Gödel, a self-confessing Platonist, believed humanity was forever superior to the computer, and could never be replaced by computer programs. He talked about the developing mind of humanity:

Mind, in its use, is not static, but constantly developing, i.e., that we understand abstract terms more and more precisely as we go on using them, and that more and more abstract terms enter the sphere of our understanding.” (Copland et al., 2013, 192)

Apparently, he did not attribute the human superiority over machines to each individual, but to the collective and cross-generational humanity. Paradoxically, if humanity is so superior, why can’t it write thinking programs given sufficient time? The human mind has been continuously evolving with our inventions. We have deemphasized counting with fingers and moved away from calculating with paper and pencils after the invention of the computer. As a matter of facts, we are still at an early stage of discovering our potential to program the computer.

On the other hand, Turing, believed machines could be advanced to eventually surpass humans:

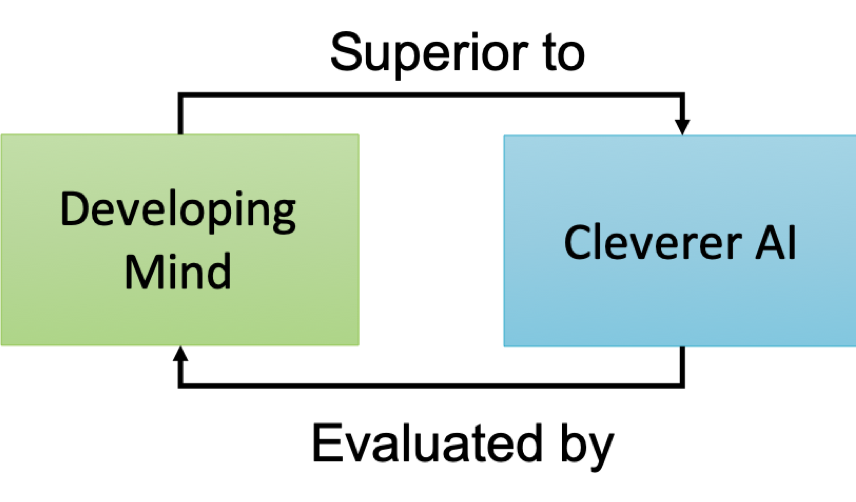

There would be no question of triumphing simultaneously over all machines. In short, then, there might be men cleverer than any given machine, but then there might be other machines cleverer again, and so on. (Copland et al., 2013, 194)

However, Turing believed that humans would be the ultimate judge to decide if machines are cleverer than humans. In his landmark paper Computing Machinery and Intelligence, Turing described the Turing test, in which a computer tries to outsmart a human interrogator to believe it is a human. He proposed that if AI can pass such a test, it should be considered as being able to think.

The self-referential relation between the developing mind and the cleverer AI can be shown below:

On the other hand, what would happen if humanity and AI mutually ascend in such a positive feedback loop? Then, the next question to ask would be: will humanity and AI converge and become a God-like entity as often portrayed in Science Fiction and speculative future predictions? For example, in Issac Asimov’s science fiction short story “The Last Question”, humanity keeps asking Multivac, the global AI computer, the question about how to reverse the second law of thermodynamics to save the universe from the heat death, The story ends with the omnipotent AC, the descendant of Multivac that merges with humanity, igniting the biblical Big Bang of the next universe.

In reality, as we continue advancing AI, we learn more about ourselves according to Turing

The whole thinking process is still rather mysterious to us, but I believe that the attempt to make a thinking machine will help us greatly in “finding out how we think ourselves.”

However, the accuracy that AI predicts must be valued, the elegance of its reasoning must be appreciated, and the extent to which it imitates us must be held in awe. Ultimately, how will the mind stay superior without AI, and who will validate whether AI is cleverer? This is perhaps the last paradox.

Bibliography

- Aaronson, S. (2016). P=?NP. In Open Problems in Mathematics. Springer.

- Aristotle ‘On Rhetoric’: A Theory of Civic Discourse (G. A. Kenndy, Trans.). (1991). Oxford University Press.

- Bansal, K., Loos, S., Rabe, M., Szegedy, C., & Wilcox, S. (2019).HOList: An Environment for Machine Learning of Higher-Order Theorem Proving. Proceedings of the 36th Conference on Machine Learning.

- Bansal, K., Szegedy, C., Rabe, M. N., Loos, S. M., & Toman, V. (2020). Learning to Reason in Large Theories without Imitation. https://arxiv.org/pdf/1905.10501.pdf

- Borel, E. (1962). Probabilities and Life. Dover Publications, Inc.

- Copland, J. B., Posy, C. J., & Shagrir, O. (2013). Computability: Turing, Gödel, Church, and Beyond (Kindle ed.). The MIT Press.

- Heule, M. J. H., Kullmann, O., & Marek, V. W. (2016). Solving and Verifying the boolean Pythagorean Triples problem via Cube-and-Conquer. In Lecture Notes in Computer Science (pp. 228–245). Springer International Publishing. 10.1007/978–3–319–40970–2_15

- Poincare, H. (1969). INTUITION and LOGIC in Mathematics. The Mathematics Teacher, 62(3), 205–212.

- Polu, S., & Sutskever, I. (2020). Generative Language Modeling for Automated THeorem Proving. arxiv.org. https://arxiv.org/abs/2009.03393

- Polya, G. (1954). Induction and Analogy in Mathematics.

- Polya, G. (1954). Mathematics and Plausible Reasoning. Martino Publishing.

- Polya, G. (1973). How to Solve It (Second ed.). Princeton University Press.

- Selsam, D., Lamm, M., Bunz, B., Liang, P., Dill, D. L., & de Moura, L. (2019). Learning a SAT Solver from Single-Bit Supervision. https://arxiv.org/abs/1802.03685