A Theorem of Erdős and Szekeres

A Theorem by two mathematical greats, which anybody, no maths experience required, can understand! An amazing, and beautiful, result…

A theorem by two mathematical greats, which anybody, no maths experience required, can understand! An amazing and beautiful result accompanied by some pretty hand-coded visualisations!

Regardless of your experience with math, this theorem can be understood, and the elegance of the solution appreciated. Throughout I will both use diagrams to explain concepts where possible. Where I use algebra notation like ‘x’ and ‘y’, I will also give concrete examples using real numbers so you can understand what is going on even if you aren’t comfortable with algebra.

Simple diagrams to explain complicated language

Before I state the problem, I want to build some understanding. That’s because the terms can be confusing to people who haven’t done much math, but by taking a little more time we can understand the problem without previous knowledge of any complicated language. If you are familiar with the terms used (in bold), then you can skip to the next section.

Sequence

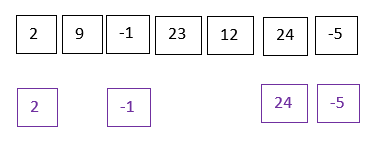

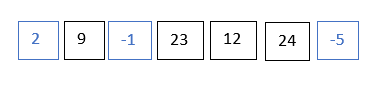

First, we learn what a sequence of numbers is. (1,2,3,4,5) is a sequence of numbers. (2,9,-1,23,12,24,-5) is another sequence of numbers. A sequence of numbers is simply a list of numbers, where you can ask what the first number is, what the second number is, and so on. For example, in the sequence (2,9,-1,23,12,24,-5), the first number is 2, the second number is 9, the third number is -1, the fourth number is 23, the fifth number is 12, the sixth number is 24 and the seventh number is -5. Then the sequence ends.

Subsequence

Second, we learn what a subsequence of numbers is. A subsequence of numbers takes a sequence, and then only looks at some numbers in the sequence. This is like going shopping in a supermarket: you don’t have to take every item in an aisle, you can instead go down the aisle, and take a couple of elements out. For example, a subsequence of (1,2,3,4,5) is (1,4,5); a subsequence of (2,9,-1,23,12,24,-5) is (2,-1,24,-5). In each case, we simply chose a few numbers, from left to right, from the original sequence.

Increasing and Decreasing Subsequence

Finally, we learn what an increasing subsequence, and decreasing subsequence are. (1,3,5) is an increasing subsequence of (1,2,3,4,5), as 1≤3≤5. A decreasing subsequence of (2,9,-1,23,12,24,-5) is (2,-1,-5), as 2≥-1≥-5.

Note that while some subsequences are increasing, and some are decreasing, others are neither. Consider the sequence (1,2,3,4,5,0). Then (1,4,0) is a subsequence which is neither increasing (as 4≥0, as 0 is after 4 in the sequence), not is it decreasing (as 1≤ 4 and 4 is after 1 in the sequence).

To use our supermarket analogy, if you go down the aisle, and every new item you pick is more expensive than the last, then you have picked an increasing sequence. If you go down the aisle, and every new item is less expensive than the last, then you have picked a decreasing sequence. And, finally, if you go down the aisle, and sometimes the next item you pick is more expensive, and sometimes less expensive, then the subsequence you have picked is neither increasing, nor is it decreasing.

Statement of the Theorem

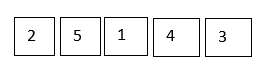

Suppose we have the numbers 1,2,3,…N, but arranged in any order. For example, if N=5, we have the numbers 1,2,3,4,5 and we allow them to be arranged in any order; such as (3,4,1,2,5)

The Erdos-Szekeres theorem tells us that there either an increasing subsequence of length root N, or there is a decreasing subsequence of root N.

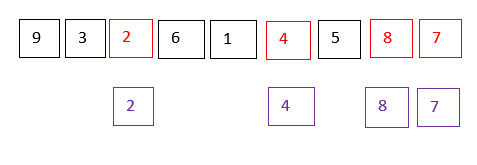

Suppose N = 3² = 9. Then the Erdos-Szekeres sequence tells us that we have the numbers (1,2,3,…,9) arranged in any order, we can either find an increasing subsequence of at least length 3, or a decreasing subsequence of at least length 3. In the sequence (9,3,2,6,1,4,5,8,7) you can check for yourself that this is indeed the case; e.g. (2,4,5,7) is an increasing subsequence of length 4. A visual illustration of this example is below.

What if N isn’t a perfect square? Then we look at the square root rounded up. If N = 50, then the square root of 50 is about 7.07, so we round upwards to 8, and the theorem tells us that we are guaranteed either an increasing subsequence of length at least 8, or a decreasing subsequence of length at least 8. However, we will only look at the case where N is a perfect square because the logic is essentially the same in both cases, just the algebra is a little messier when N is not a square number.

Proof

Part I

For each number in the sequence, consider the longest increasing subsequence which ends at that number, and longest decreasing subsequence which ends at that number.

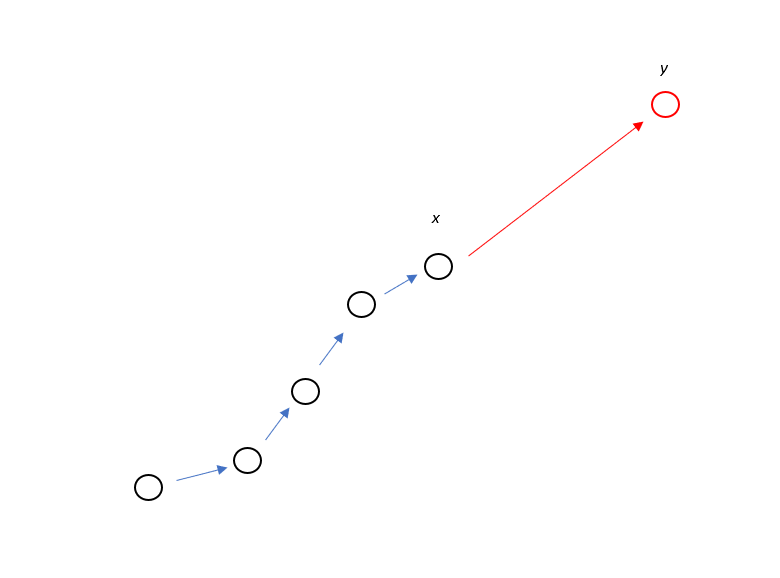

Consider two points in the sequence, x and y, with x earlier in the sequence than y. If x is larger than y, i.e. x>y, then for any decreasing sequence which ends at x, we can make it longer by making it end at y. This remains a decreasing sequence, as y is smaller than x. The diagram below illustrates this

For instance, if x=10, and y=5, the decreasing sequence (16,14,13,11,10) which ends at x, can be made longer by including y on the end, (16,14,13,11,10,5), and this remains a decreasing subsequence. This guarantees that the longest decreasing subsequence ending at y is longer than the longest decreasing subsequence ending at x, provided x>y.

If y is larger than x, i.e. y>x then we get a very similar result. If we have an increasing subsequence ending at x, we can make it longer by including y, which remains an increasing subsequence as y>x. This guarantees that the longest increasing subsequence ending at x is shorter than the longest increasing subsequence ending at y, provided y>x.

Note, either y>x or x>y, as all the numbers in our sequence are different.

We will be using the following notation in the next sections, so please read the next two paragraphs carefully.

Let Inc[x] denote the length of the longest increasing subsequence ending at x, and let Dec[x] denote the length of the longest decreasing subsequence ending at x. We use the same notation for y. E.g. in (1,2,3,0,4), Inc[1] = 4, as the longest increasing subsequence starting at 1 is (1,2,3,4) which is length 4. To help you remember, ‘Inc’ is an abbreviation of ‘Increasing’, and ‘Dec’ is an abbreviation of ‘Decreasing’

It is impossible that both Inc[x] = Inc[y] and Dec[x] = Dec[y]. This is because, if x>y then Dec[x] < Dec[y], and if x<y, then Inc[x] < Inc[y]. This is simply using the logic above, but with less wordy notation. The notation merely says that when x>y, we can create a longer decreasing subsequence ending at y than x, and when x<y, we can create a longer increasing subsequence ending at y than x.

An Interlude for Visualisation

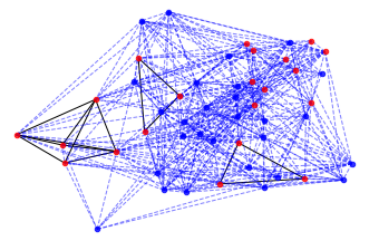

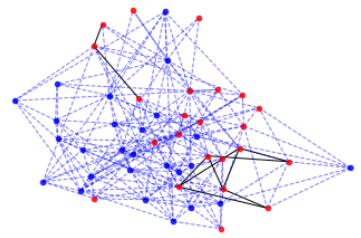

To get a feel for the problem, I wrote some code to visualise what the problem looks like as a graph. Every image represents a different simulation. Code will be released in an addendum piece very soon! (Made using Python and NetworkX and matplotlib)

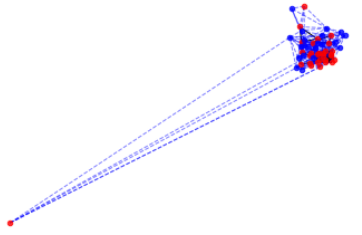

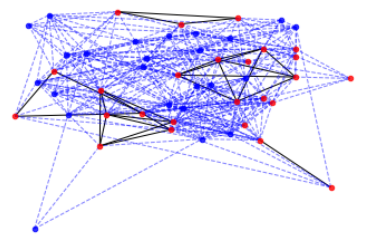

Each ‘dot’ (technically: node) of the graph represents one of the numbers in our sequence. I set n=49=7². (The image at the top was for n=1000)

A connection is drawn between a node x and a node y if (Dec[x]==Dec[y] or Inc[x]==Inc[y]).

E.g. in (4,5,6,1,2,3) we would connect the node labelled (6) with the node labelled (3), as the length of the longest increasing subsequence ending with (6) is length 3, and the length of the longest increasing subsequence ending with (3) is also length 3, so Inc(3)==Inc(6).

We colour a node red if the longest increasing subsequence, or the longest decreasing subsequence, ending at that point, is greater than root N. These red points therefore show us how often the condition of the theorem is met. I.e. a red point signifies the existence of an increasing or decreasing subsequence of length at least root N. Every diagram contains red points!

Next, we thicken some of the edges (‘connections’). We thicken the connections if they are a connection between red dots. This is to give some feel for the density of red dots.

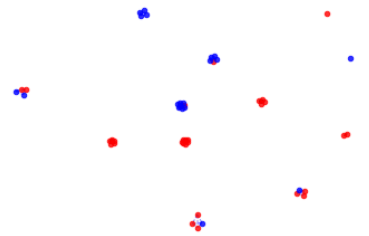

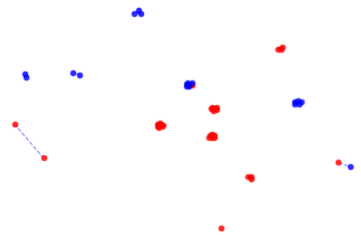

Now, let’s visualise in another way. Now, we only draw edges between two nodes if Dec[x] == Dec[y]. This visualises, for each point in the sequence, how many other points’ longest decreasing subsequence is the same length.

We can do the same for increasing subsequences. We now draw an edge between two nodes x and y if Inc[x]==Inc[y]. This visualises, for each point in the sequence, how many other points’ longest increasing subsequence is the same length.

These two approaches to visualising the problem also highlight two different approaches to solving it. Initially, I focused on the first group of graphs. This looks at how Dec[] and Inc[] relate to each other and to themselves. It seems to contain a lot more information, as can be seen visually by the denser connections. But actually, we only need the approach of the second graphs. The second graphs look at Dec[] and Inc[] independently, and specifically, looks at their clustering. It is this clustering which will hold the key to solving the problem.

This is because limiting the length of the increasing and decreasing subsequences allowed limits the number of clusters, as each cluster represents subsequences of a certain length. And, as you limit the clusters, you force more and more nodes to share clusters. We will see how this plays out!!

Part II

Now, suppose N=k². If the maximum length increasing and decreasing subsequences are less than k, then for all x, Inc[x]≤k-1, and Dec[x]≤k-1

As we have N=k² numbers, that means Inc[1], Inc[2], …, Inc[k²] ≤ k-1, and Dec[1], Dec[2], …, Dec[k²] ≤ k-1.

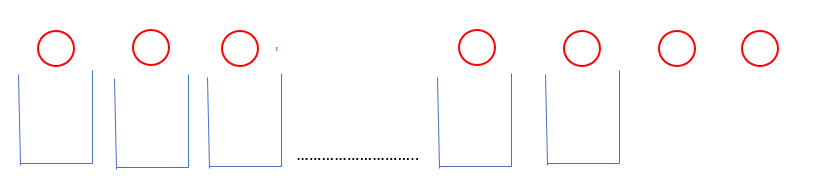

We let each of the values [1,2,.., (k-1)] be represented by a bucket. There is a bucket labelled 1, a bucket labelled 2 and so on. We put Inc[x] in bucket 3 if Inc[x]=3, and put Dec[y] in bucket 11 if Dec[y] = 11. I.e. an increasing or decreasing subsequence can be put in a bucket if the length of the sequence is the same as the label on the bucket.

This will enable us to turn a problem about sequences into one about fitting a large number of items into a small number of buckets.

If N=9, k=3, then we see that if we try and fit k²=9 items into k-1=2 buckets, then one bucket must contain at least 5 = k+2 items.

Now we look at this more generally.

First, we see which buckets Dec[1], Dec[2], Dec[3], … Dec[k²] go into. As there are only (k-1) buckets in total, one bucket must have (k+2) items in it. Why is this so? Suppose you had 9 buckets and 100 items (k=10, n=100). Then, even if you tried to spread out the items as much as possible, eight of the buckets would have 11 items (k+1), and the last bucket would have 12 items (k+2). Alternatively, suppose that every bucket had only 11 items in. Counting over all the buckets, that would give only 99 items, when we know there were 100 items total.

So, we are guaranteed to have a bucket with (k+2) items from Dec[1], Dec[2], … Dec[k²] in it. We now solely look at those (k+2) values whose Dec[]’s fall into the same bucket.

Each Dec[x] has an Inc[x]. However, if Dec[x] and Dec[y] share a bucket, then Inc[x] and Inc[y] cannot share a bucket, as we discovered earlier. However, this means we have to put (k+2) items into k buckets, without any of them sharing a bucket.

This is obviously impossible: to fit (k+2) items into k buckets, at least two items must share a bucket. But then for these two numbers, say x and y, Dec[x] and Dec[y] share a bucket, and Inc[x], Inc[y] share a bucket. But this is impossible, as we found out earlier!

Therefore we can conclude that there must be an increasing subsequence of length k or more, or there is a decreasing subsequence of length k or more. If there was not, there would be too few ‘buckets’ to fit all the subsequences for Dec[1],…,Dec[k²], Inc[1],…,Inc[k²] into!

Afterword: Simplicity and Structure

The first proof of this Theorem was given by Erdos and Szekeres, in 1935. That’s actually quite recent! You normally have to go some way into a mathematics degree before coming across 20th century mathematics!!

I came across it in the wonderful book The Nature of Computation by Moore and Mertens, where it is one of the practice problems. Moore and Mertens give the hint to use Inc(), Dec() and a buckets in hole style argument; without this hint I would have had no chance of solving it. (They use different notation, which unfortunately wouldn’t render well on medium, so I created my own). This approach to the proof was originally thought of by the brilliant mathematician Seidenberg.

These sorts of problems are hard in part because of their simplicity. It is not at all clear what properties of the problem you should use to solve them. I spent a day and a half trying to solve it in different ways, and hitting all sorts of dead ends. When I tried to solve the problem without the hint, I was often using more complicated properties which turned out not to be needed. This is what I tried to convey in the visualisation section — we managed to solve this problem by ignoring a lot of the details!!

These sorts of problems are also very elegant, because they give information about structure which must emerge in an object; it really is mathematics for mathematics’ sake.

P.S. the theorem, in slightly more general form (but the essence of the argument is the same), has its own wikipedia page. You can check out the paper where Erdos and Szekeres published their original proof here